
Operating systems include many heuristic algorithms designed to improve overall storage performance and throughput. Hence, this technique prevented the starvation problem for those processes which have large burst times. Other processes moved into the lower queue (the last queue). As a result, 70% of the processes in the second queue was received CPU.

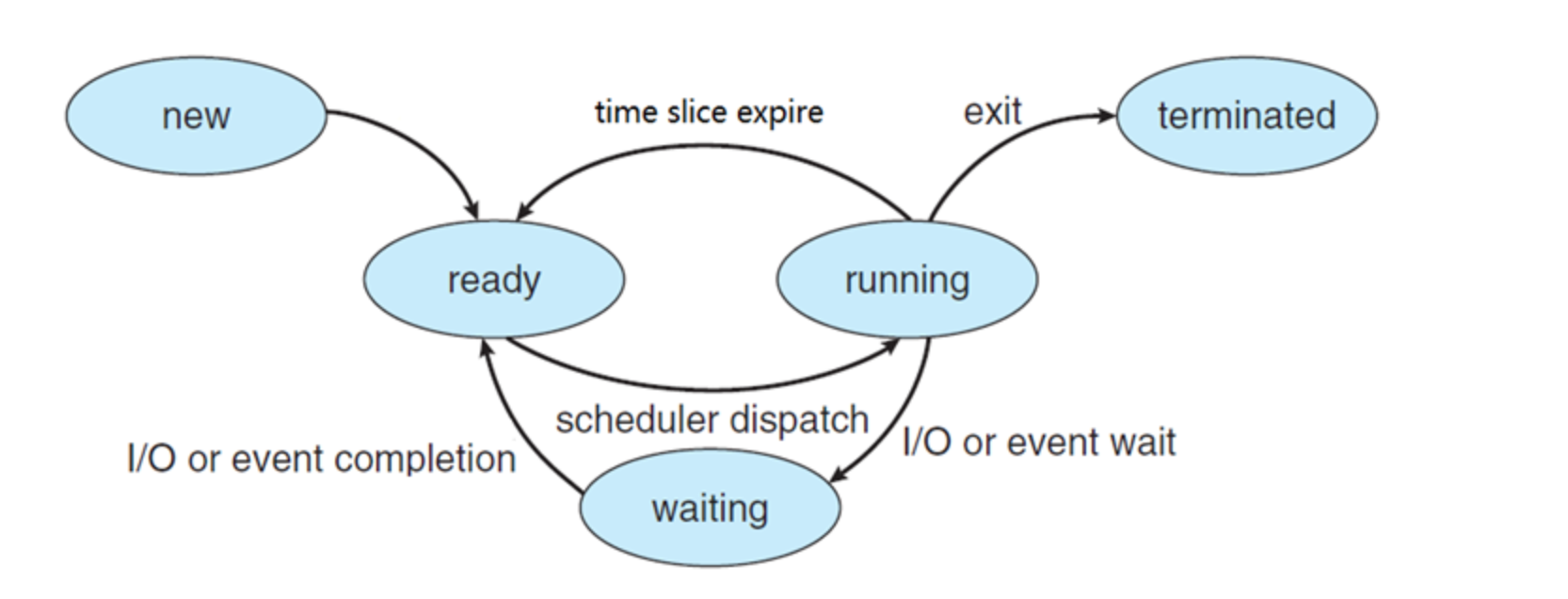

Here, we calculated the average burst time of all remaining processes and defined that burst time as a quantum for the second queue after the Shortest Job First (SJF) scheme was applied in this queue. The process that had more burst time than the defined quantum in the first queue was allowed to move into the second queue. In the first queue, we used the Round Robin (RR) algorithm with less quantum time for processes that have a short burst time. Other than that, we compared both results and observed which dynamic time quantum delivers the best result. We implemented a Multi-Level Feedback Queue (MLFQ) algorithm with static time quantum for the first queue, making an efficient scheduling algorithm of MLFQ dynamic time quantum used in the second queue. CPU scheduling algorithms come in a score of forms and any strategy has advantages and disadvantages. We need to schedule our Central Processing Units (CPUs) efficiently to handle these services. Our experiments show that KML consumes little OS resources, adds negligible latency, and yet can learn patterns that can improve I/O throughput by as much as 2.3x or 15x for the two use cases respectively - even for complex, never-before-seen, concurrently running mixed workloads on different storage devices.Ī multitasking operating system allows many programs to run at the same time. We developed a prototype KML architecture and applied it to two problems: optimal readahead and NFS read-size values. In this paper, we describe our proposed ML architecture, called KML. We propose that ML solutions become a first-class component in OSs and replace manual heuristics to optimize storage systems dynamically. Machine learning (ML) techniques promise to learn patterns, generalize from them, and enable optimal solutions that adapt to changing workloads. Storage systems are usually responsible for most latency in I/O heavy applications, so even a small overall latency improvement can be significant. 
Because such heuristics cannot work well for all conditions and workloads, system designers resorted to exposing numerous tunable parameters to users - essentially burdening users with continually optimizing their own storage systems and applications. We find our result interesting in the context that generally operating systems presently never make use of a program's previous execution history in their scheduling behavior. This was due to a reduction in the number of context switches needed to complete the process execution. We find that predictive scheduling could reduce TaT in the range of 1.4% to 5.8%. We experimentally find that the C'4.5 Decision Tree algorithm most effectively solved the problem. The "Waikato Environment for Knowledge Analysis" (Weka), an open source machine-learning tool is used to find the most suitable ML method to characterize our programs. In our experimentation we modify the Linux Kernel scheduler (version 2.4.20-8) to allow scheduling with customized time slices. Our objective was to discover the most important static and dynamic attributes of the processes that can help best in prediction of CPU burst times which minimize the process TaT (Turn-around-Time). Learning is done by an analysis of certain static and dynamic attributes of the processes while they are being run.

In this work we use Machine Learning (ML) techniques to learn the CPU time-slice utilization behavior of known programs in a Linux system.
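The MLFQ scheme described in this post (Round Robin with a small static quantum in the first queue, then SJF ordering and an average-burst quantum in the second queue) can be illustrated with a minimal Python sketch. This is not the authors' implementation. It assumes that all processes arrive at time 0, that each process gets a single pass through each queue, that the average is rounded up to a whole time unit, and that the last queue runs leftovers first come, first served. The function name `simulate_mlfq` and the parameter `q1` are hypothetical.

```python
import math


def simulate_mlfq(bursts, q1=4):
    """Simulate the two-queue scheme plus a final FCFS queue.

    bursts: dict mapping process id -> CPU burst time (assumed: all arrive at t=0).
    Returns (finish_times, q2_quantum, finished_in_q2).
    """
    remaining = dict(bursts)
    finish = {}
    clock = 0

    # Queue 1: one Round Robin pass with a small static quantum q1.
    queue2 = []
    for pid in bursts:
        run = min(q1, remaining[pid])
        clock += run
        remaining[pid] -= run
        if remaining[pid] == 0:
            finish[pid] = clock
        else:
            queue2.append(pid)  # burst exceeded q1: demote to queue 2

    # Queue 2: SJF order, then quantum = average remaining burst.
    # Rounding up with math.ceil is an assumption of this sketch.
    queue2.sort(key=lambda pid: remaining[pid])
    if queue2:
        q2 = math.ceil(sum(remaining[p] for p in queue2) / len(queue2))
    else:
        q2 = 0
    queue3, finished_in_q2 = [], []
    for pid in queue2:
        run = min(q2, remaining[pid])
        clock += run
        remaining[pid] -= run
        if remaining[pid] == 0:
            finish[pid] = clock
            finished_in_q2.append(pid)
        else:
            queue3.append(pid)  # still unfinished: demote to the last queue

    # Last queue: run leftovers to completion, first come first served.
    for pid in queue3:
        clock += remaining[pid]
        remaining[pid] = 0
        finish[pid] = clock

    return finish, q2, finished_in_q2


if __name__ == "__main__":
    finish, q2, done_in_q2 = simulate_mlfq({"A": 3, "B": 10, "C": 6, "D": 20}, q1=4)
    print(finish, q2, done_in_q2)
```

With the illustrative bursts A=3, B=10, C=6, D=20 and `q1=4`, the second-queue quantum comes out as 8. C and B finish in the second queue, and only D drops to the last queue. Because every process is assumed to arrive at time 0, each finish time is also that process's turnaround time.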
